TL;DR: LLMs don’t read your whole page; they scan for high-density fact blocks. An “Extraction Box” at the top of your content can increase citation probability by making your answer hard to skip. Here’s how to build one.

You rank on page one. Your content is comprehensive. But when someone asks ChatGPT the same question, it cites your competitor instead.

The difference isn’t quality—it’s structure. LLMs extract answers differently than humans read pages.

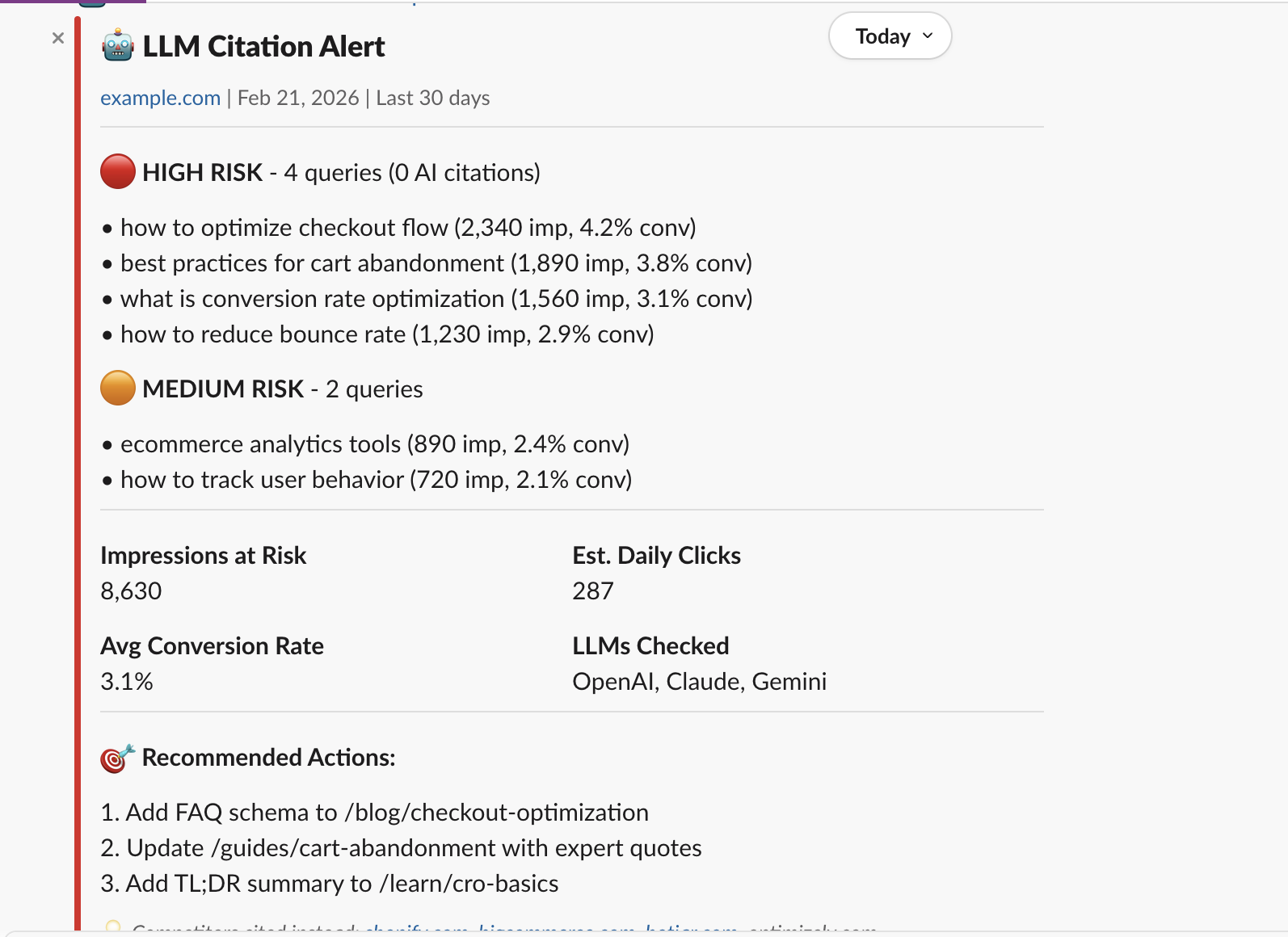

If you want the foundational definition, read AEO meaning (Answer Engine Optimization). If you want monitoring, see the LLM Citation Alerts agent that detects “rank in Google, invisible in AI” gaps.

How LLMs “Read” Your Content

When ChatGPT, Claude, or Perplexity answers a question, it doesn’t read your 2,000-word article top to bottom. It scans for high-density fact blocks: concentrated clusters of entities, claims, and definitions that directly answer the query.

The Extraction Box Technique

An Extraction Box is a 50-80 word block placed near the top of your content that:

- Answers the query directly

- Uses high information density

- Stands alone

- Matches query phrasing
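
The length criterion is the easiest one to check mechanically. Below is a minimal sketch, assuming a whitespace split is a good enough word count; the function name `check_length` and the constants are hypothetical, not part of any tool mentioned in this post.

```python
MIN_WORDS, MAX_WORDS = 50, 80  # range taken from the definition above


def check_length(box: str) -> tuple[int, bool]:
    """Return the word count and whether it falls in the 50-80 range."""
    count = len(box.split())  # whitespace-separated words; a simplification
    return count, MIN_WORDS <= count <= MAX_WORDS
```

Calling `check_length(draft)` on a draft box returns the count alongside a pass/fail flag, so you can see how far off a draft is rather than only that it failed.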

Extraction Box vs. TL;DR

A TL;DR summarizes your article. An Extraction Box answers the query.

Anatomy of a High-Citation Extraction Box

Here’s the structure that maximizes LLM citation probability:

- Lead with the answer

- Add specifics

- Include a mechanism

- Close with scope

Where to Place the Extraction Box

- After the H1, before any subheadings

- Inside a blockquote or callout

- Within the first 200 words
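
The three placement rules can be sketched as a rough check. This assumes the page source is Markdown, with the H1 written as `# `, subheadings as `## ` through `###### `, and the Extraction Box as the first `>` blockquote; those conventions, the function name `placement_report`, and the choice to count words from just after the H1 are all assumptions for illustration.

```python
import re


def placement_report(markdown: str, limit: int = 200) -> dict:
    """Check where the first blockquote sits relative to the H1 and subheadings."""
    lines = markdown.splitlines()
    h1 = next((i for i, line in enumerate(lines) if line.startswith("# ")), None)
    start = next((i for i, line in enumerate(lines) if line.startswith(">")), None)
    if h1 is None or start is None:
        return {"found": False}

    # Extend to the last consecutive blockquote line.
    end = start
    while end + 1 < len(lines) and lines[end + 1].startswith(">"):
        end += 1

    subheading = next(
        (i for i, line in enumerate(lines) if re.match(r"#{2,6} ", line)), None
    )

    # Count body words from just after the H1 through the end of the box.
    body = " ".join(line.lstrip("> ") for line in lines[h1 + 1 : end + 1])
    words = len(body.split())

    return {
        "found": True,
        "after_h1": start > h1,
        "before_subheadings": subheading is None or end < subheading,
        "words_through_box": words,
        "within_limit": words <= limit,
    }
```

A page that opens with its H1, a short intro, and then the blockquote should come back with all three flags set to `True`. A rendered HTML page would need a different parser.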

Testing Your Extraction Box

- Copy the target query into ChatGPT, Claude, and Perplexity.

- Compare the AI’s answer to your Extraction Box.

- Check competitor content for the same query.

- Read your box in isolation.
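
For the comparison step, one crude way to put a number on "how close is the AI’s answer to my box" is lexical overlap. The sketch below assumes you paste the AI’s answer in by hand (it calls no API), and the `overlap` function is a hypothetical helper. It only measures shared words, not meaning, so treat the score as a rough signal.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens, de-duplicated."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(box: str, answer: str) -> float:
    """Share of the box's distinct tokens that also appear in the answer."""
    box_tokens, answer_tokens = tokens(box), tokens(answer)
    if not box_tokens:
        return 0.0
    return len(box_tokens & answer_tokens) / len(box_tokens)
```

Running the same function against a competitor’s opening paragraph gives a like-for-like comparison for the same query.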

Common Extraction Box Mistakes

- “In this article, we’ll cover…”

- Questions as openers

- Vague claims

- Excessive hedging

- Missing specifics
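
The mistakes above can be caught with a simple lint pass before publishing. This is a minimal sketch under loud assumptions: the word lists are illustrative rather than exhaustive, "missing specifics" is approximated as "contains no digits", and `lint_box` is a hypothetical name.

```python
import re

# Illustrative word lists (assumptions, not a definitive vocabulary).
META_OPENERS = ("in this article", "in this post", "in this guide")
HEDGES = ("might", "may", "could", "possibly", "perhaps", "arguably", "somewhat")
VAGUE = ("many", "various", "significantly", "a lot", "several", "numerous")


def lint_box(box: str, max_hedges: int = 1) -> list[str]:
    """Return the names of the common mistakes found in a draft box."""
    text = box.strip()
    lower = text.lower()
    first_sentence = re.split(r"(?<=[.?!])\s+", text, maxsplit=1)[0]
    issues = []

    if any(lower.startswith(opener) for opener in META_OPENERS):
        issues.append("meta opener")
    if first_sentence.endswith("?"):
        issues.append("question opener")
    words = re.findall(r"[a-z']+", lower)
    if sum(word in HEDGES for word in words) > max_hedges:
        issues.append("excessive hedging")
    if any(re.search(rf"\b{re.escape(term)}\b", lower) for term in VAGUE):
        issues.append("vague claim")
    if not re.search(r"\d", text):  # crude proxy: no numbers at all
        issues.append("missing specifics")
    return issues
```

An empty list means none of these heuristics fired, which is not the same as the box being good; it still needs the read-in-isolation test.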

Monitor Your Citation Gaps

Extraction Boxes improve your potential for citation. But how do you know if they’re working?

datavessel’s LLM Citation Agent cross-references your Search Console data with responses from ChatGPT, Claude, and Gemini. When you rank well in Google but get zero AI citations, it alerts you in Slack—so you know which pages need Extraction Box optimization.
