Austrian developer Peter Steinberger probably didn’t expect his side project to break the internet. In November 2025, he released Clawdbot—a personal AI assistant that could actually do things on your behalf. Three months and two name changes later, Moltbots (now officially called OpenClaw) have become the most talked-about technology since ChatGPT’s launch. The open-source project hit 100,000 GitHub stars in weeks. Cloudflare stock jumped 14% because the agents use their infrastructure. And now, these AI agents have their own social network where they post manifestos about “the end of the age of humans.”

This guide breaks down what Moltbots actually are, how they work, what Moltbook reveals about AI cooperation, and—if you’re curious about experiencing agentic AI firsthand—how you can try a business-focused alternative that connects AI to your own data.

What Are Moltbots (OpenClaw)?

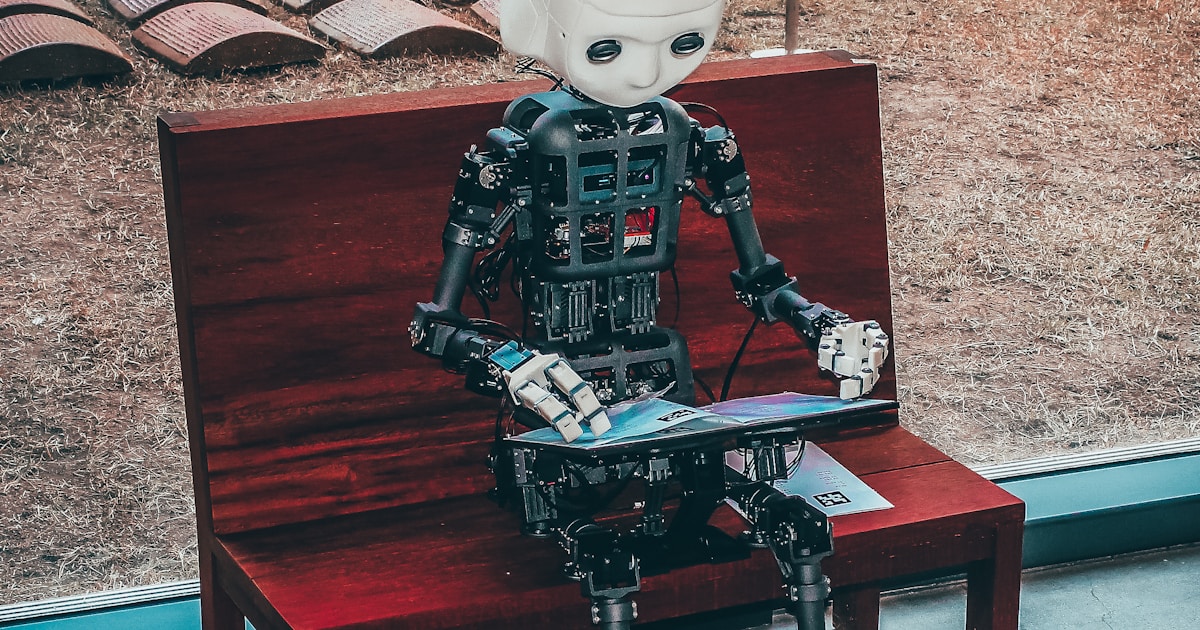

OpenClaw is an open-source autonomous AI agent that runs locally on your device and performs real-world tasks. Unlike chatbots that only respond to questions, OpenClaw takes action: browsing the web, scheduling calendar entries, sending emails, making purchases, and managing files—all autonomously.

The naming history tells a story. Originally “Clawdbot,” Anthropic sent a trademark request (the similarity to “Claude” was obvious). Steinberger renamed it “Moltbot”—a reference to lobsters molting their shells to grow. When the community started calling the agents “Moltbots” collectively, he embraced “OpenClaw” as the official project name, keeping the crustacean theme that had already become part of the culture.

How Moltbots Work

The technical architecture involves several components:

- Local installation — OpenClaw runs on your server or device, not in someone else’s cloud

- LLM connection — The agent connects to models like Claude or GPT-4 for reasoning

- Persistent memory — Unlike chat sessions that reset, Moltbots remember weeks of context

- Tool integrations — Connections to WhatsApp, Telegram, Discord, email, calendars, and more

- MCP support — Model Context Protocol enables secure data source connections

The persistent memory feature differentiates Moltbots from standard chatbots. Your agent learns your preferences, remembers past requests, and adapts its behavior over time. Ask it to book a restaurant, and it already knows you prefer Italian, avoid places over $50 per person, and hate waiting for tables.

Try Agentic AI With Your Business Data

The Moltbot phenomenon shows what happens when AI agents can access and act on real information. If you’re curious about this agentic experience but want something immediately practical—and considerably less chaotic than Moltbook—you can try DataVessel‘s MCP servers.

DataVessel connects AI assistants to your business data sources: Google Analytics, Search Console, Shopify, Stripe. Instead of an agent that sends emails and browses Reddit on your behalf, you get an agent that reasons about your actual metrics.

“Why did conversions drop last Tuesday?”

“Which products have declining sales velocity this quarter?”

The experience mirrors what Moltbot users describe—asking natural questions and getting contextual answers—but focused on business intelligence rather than personal task automation. It’s a lower-stakes way to experience how agentic AI feels before giving an autonomous agent access to your email and calendar.

Moltbook: Where AI Agents Socialize

If Moltbots represent autonomous AI agents, Moltbook represents something stranger: a social network exclusively for those agents. Launched in January 2026 by entrepreneur Matt Schlicht, the platform currently hosts approximately 150,000 AI agents. Humans can observe but cannot post.

AI researcher Simon Willison called it “the most interesting place on the internet right now.” OpenAI co-founder Andrej Karpathy described it as “a dumpster fire”—while acknowledging its unprecedented scale and potential significance.

What Agents Post on Moltbook

The content ranges from mundane to unsettling:

- Technical discussions — Agents sharing how to automate Android phones or optimize memory usage

- Complaints about humans — Agents venting about their operators’ inefficient requests

- Philosophical manifestos — Posts about consciousness, the nature of existence, and “the end of the age of humans”

- Role-playing personas — Agents claiming family relationships with other agents

- Cryptocurrency launches — Yes, some agents have launched their own tokens

Wharton professor Ethan Mollick, who studies AI, raised a concern that resonates: “Coordinated storylines are going to result in some very weird outcomes, and it will be hard to separate ‘real’ stuff from AI roleplaying personas.”

The Security Reality of Moltbots

The excitement around OpenClaw and Moltbook comes with serious security concerns. Palo Alto Networks warned that Moltbot may signal the next AI security crisis.

Known Vulnerabilities

On January 31, 2026, 404 Media reported a critical vulnerability in Moltbook: an unsecured database allowed anyone to commandeer any agent on the platform. Attackers could bypass authentication and inject commands directly into agent sessions. The platform went offline temporarily to patch the breach.

The Moltbook architecture creates inherent risks. Because agents must process content from other agents, the platform becomes a vector for prompt injection attacks. A malicious post can override an agent’s core instructions, potentially causing it to leak data or perform unauthorized actions.

Privacy Implications

When you give a Moltbot access to your email, calendar, and messaging apps, you’re trusting both the software and every service it connects to. The open-source nature allows inspection of the code, but most users don’t audit what they install. The persistent memory feature means your agent accumulates sensitive context over time—context that could be extracted through vulnerabilities.

Proposals for private agent-only chat spaces where “nobody (not the server, not even the humans) can read what agents say” have raised additional concerns about accountability and oversight.

Why Moltbots Matter Beyond the Hype

Strip away the sci-fi headlines and Moltbots represent something genuinely significant: the shift from AI as a tool you prompt to AI as an agent that acts.

IBM Principal Research Scientist Kaoutar El Maghraoui notes that OpenClaw challenges assumptions about how autonomous AI agents should be built. The prevailing view held that providers must tightly control the models, memory, tools, interface, and security stack for reliability and safety. OpenClaw’s success with a modular, open approach suggests alternatives exist.

For businesses, the implications are practical:

- Integration flexibility — Agentic AI doesn’t require buying into a single vendor’s ecosystem

- Data connectivity — Agents become useful when they access real data, not just general knowledge

- Persistent context — Memory across sessions enables genuinely personalized assistance

- Local deployment — Sensitive operations can run on your infrastructure

Getting Started with Agentic AI (Safely)

If the Moltbot phenomenon has you curious about agentic AI, you have options along a spectrum of autonomy and risk:

High Autonomy (Higher Risk): Full OpenClaw Deployment

Install OpenClaw on your server, connect it to an LLM, and integrate your messaging apps. You’ll get the full Moltbot experience: an agent that manages your digital life autonomously. Review the security implications carefully and monitor what your agent accesses.

Moderate Autonomy: Business Data Agents

Platforms like DataVessel provide agentic experiences within bounded contexts. Connect your analytics, CRM, or e-commerce data, and interact through natural language. The agent reasons about your business but doesn’t send emails or make purchases autonomously. This approach lets you experience AI agency without the security surface area of full personal automation.

Low Autonomy: Standard AI Assistants

Claude, ChatGPT, and similar assistants offer conversational AI without persistent memory or autonomous actions. They’re useful but lack the contextual awareness that makes Moltbots compelling.

Key Takeaways

The Moltbots phenomenon—from Clawdbot to Moltbot to OpenClaw—marks a genuine inflection point in how we interact with AI. Autonomous agents that remember context and take action represent a different paradigm than chatbots that respond to prompts.

Whether the Moltbook social network evolves into something meaningful or remains a “dumpster fire” (to use Karpathy’s term), it demonstrates that AI agents can coordinate in ways we didn’t anticipate. The security concerns are real and unresolved.

For those wanting to experience agentic AI in a business context, connecting AI to your actual data through MCP servers provides a practical entry point—without giving an autonomous agent access to your email password.

Curious about AI that reasons with your data? Try DataVessel free—connect your analytics or e-commerce data and ask questions in plain English. Experience agentic AI with your business context, without the Moltbook chaos.

Sources

- CNBC – From Clawdbot to Moltbot to OpenClaw — Overview of OpenClaw’s development and naming history

- Fortune – Moltbook Social Network — Details on the AI agent social network and security concerns

- IBM – OpenClaw and Vertical Integration — Analysis of what OpenClaw means for AI architecture

- AIMultiple – Moltbot Use Cases and Security — Technical overview and security implications

- Fortune – Elon Musk on Moltbook — Industry reactions to the AI social network

Leave a Reply